An Exploration to Enhance Human-machine Collaboration through Eye Gaze 2021, United Kingdom, London

An Exploration of Remote Human-machine Collaboration through Eye Gaze Using 3 Modes of Interactions

An exploration of a new method to enhance human-robot collaboration through eye gaze, demonstrated by remotely controlling a drone, a spider robot, an electronic curtain and an agent robot vehicle.

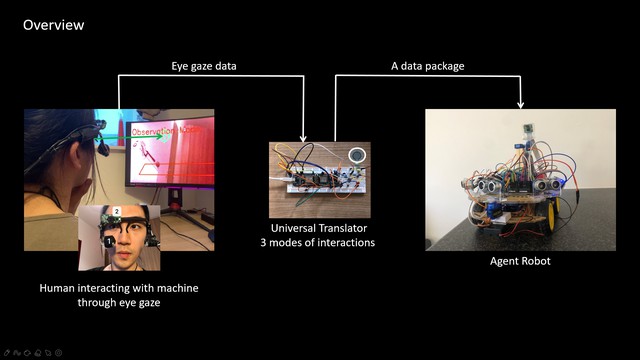

This project explores a new method to enhance human-machine collaboration. DIY eye-tracking glasses collect eye gaze data in the real world and pass it to the Universal Translator, a device that translates between eye gaze information and machine signals. The Universal Translator then sends a data package, which also includes other body interaction instructions collected by its own sensors, to the Drone, Spider Robot, electronic curtain and Agent Robot Vehicle. These machines move to the positions in the real world that the user is looking at. In addition, to tackle two potential problems with eye-tracking technology that I found during the experiments, I designed three modes for the Agent Robot Vehicle: Pure Eye Control Mode, Line Track Mode and Observation Mode. Across these modes, the Agent Robot Vehicle can avoid obstacles automatically, track a line on the ground and, when there is more than one line, choose which one to follow according to the user's eye gaze. It can also stop all movement and let the camera mounted on the robot be controlled remotely by eye gaze, so that the camera rotates in step with the user's pupils and the user can observe the surroundings with a broader view.
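The gaze-based choice between several lines can be illustrated with a minimal sketch. It assumes that the detected branches and the gaze point are both expressed as horizontal positions normalised to the range -1 (far left) to 1 (far right); the function name `choose_branch` and this coordinate convention are hypothetical and not taken from the project.

```python
def choose_branch(branch_xs, gaze_x):
    """Return the index of the branch closest to the gaze, or None.

    branch_xs: horizontal positions of the detected line branches,
               normalised to [-1, 1] (assumed convention).
    gaze_x:    horizontal gaze position in the same coordinates.
    """
    if not branch_xs:
        return None  # no line visible, nothing to choose
    # Pick the branch with the smallest horizontal distance to the gaze.
    return min(range(len(branch_xs)), key=lambda i: abs(branch_xs[i] - gaze_x))


# Three branches at a fork; the user looks towards the right-hand one.
selected = choose_branch([-0.6, 0.1, 0.7], gaze_x=0.5)
```

A nearest-branch rule is only one plausible way to resolve a fork; the project may use a different criterion.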

Details

Team members : Zikun Quan, developed as part of the Body as Interface | City as Interface studio, MSc Architectural Computation, Bartlett School of Architecture, University College London

Supervisor : Associate Professor Ava Fatah gen Schieck

Institution : Bartlett School of Architecture, University College London

Descriptions

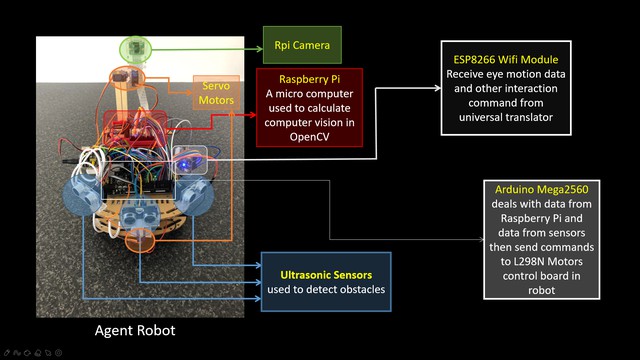

Technical Concept : OpenCV on the PC processes the video of eye motion captured by the eye-tracking glasses and calculates the position in the real world that the eyes are looking at. OpenCV on the Raspberry Pi processes the images captured by the camera mounted on the Agent Robot and sends the processed data to the Arduino, which enables the robot to track the line and choose a direction according to the user's eye gaze. The Arduino handles the data collected by the sensors mounted on the robot and controls the robot's movements. The Universal Translator receives the eye gaze data from the PC through serial communication and sends the data package, including other interaction instructions collected by its own sensors, to the Wi-Fi module on the Agent Robot over Wi-Fi.
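The data package passed from the PC to the Universal Translator and on to the robot could look something like the sketch below. The byte layout (a header byte, two signed 16-bit gaze coordinates, a mode byte and a one-byte checksum) and the names `encode_packet` and `decode_packet` are assumptions made for illustration; the source does not describe the actual format.

```python
import struct

HEADER = 0xAA  # hypothetical start-of-packet marker
# Little-endian: header (uint8), gaze_x (int16), gaze_y (int16), mode (uint8)
_BODY = struct.Struct("<BhhB")


def encode_packet(gaze_x, gaze_y, mode):
    """Pack gaze coordinates and the active mode into a 7-byte packet."""
    body = _BODY.pack(HEADER, gaze_x, gaze_y, mode)
    checksum = sum(body) & 0xFF  # simple additive checksum over the body
    return body + bytes([checksum])


def decode_packet(packet):
    """Validate a packet and return (gaze_x, gaze_y, mode)."""
    if len(packet) != _BODY.size + 1:
        raise ValueError("unexpected packet length")
    body, checksum = packet[:-1], packet[-1]
    if sum(body) & 0xFF != checksum:
        raise ValueError("checksum mismatch")
    header, gaze_x, gaze_y, mode = _BODY.unpack(body)
    if header != HEADER:
        raise ValueError("bad header")
    return gaze_x, gaze_y, mode


# Round trip: what the sender packs is what the receiver unpacks.
packet = encode_packet(320, -45, 2)
decoded = decode_packet(packet)
```

A fixed-length binary packet with a checksum is easy to parse on a microcontroller such as an Arduino, which is why it is used here; a text-based format would work as well.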

Visual Concept : Users can see what the Agent Robot's camera sees on the computer screen in real time and use eye gaze to take part in the collaboration with the agent robot and the environment. In Observation Mode, the camera mounted on the Agent Robot rotates in step with the user's pupil motion, so that the user can use their eyes to look around for a broader view on the computer screen.
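One way to make a camera follow the pupils in Observation Mode is to map the pupil offset linearly onto pan and tilt servo angles. The sketch below assumes the pupil position is normalised to the range -1 to 1 on each axis, with 0 meaning looking straight ahead; the function name `pupil_to_servo` and the angle ranges are hypothetical defaults, not values from the project.

```python
def pupil_to_servo(pupil_x, pupil_y,
                   pan_range=(0.0, 180.0), tilt_range=(45.0, 135.0)):
    """Map a normalised pupil offset to (pan, tilt) servo angles in degrees."""

    def scale(value, low, high):
        # Clamp to [-1, 1] so a tracking glitch cannot overdrive the servo.
        value = max(-1.0, min(1.0, value))
        # Linear map: -1 -> low, 0 -> midpoint, 1 -> high.
        return low + (value + 1.0) / 2.0 * (high - low)

    return scale(pupil_x, *pan_range), scale(pupil_y, *tilt_range)


# Looking straight ahead centres both servos with the default ranges.
pan, tilt = pupil_to_servo(0.0, 0.0)
```

In practice the raw pupil position would probably need smoothing before it drives the servos, since eye movements are fast and jittery.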

Credits

Zikun Quan, developed as part of the Body as Interface | City as Interface studio, MSc Architectural Computation, Bartlett School of Architecture, University College London

Background music: Mumbai, from Epidemic Sound (royalty-free music and sound effects)